Notice: this article is reposted from CSDN. Original: https://blog.csdn.net/qq_37608398/article/details/80217604

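This article is intended only for studying the Python source code; I accept no responsibility for any consequences of using it. If you want to read novels, please support the official editions.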

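My earlier Biquge (笔趣阁) crawlers downloaded one novel at a time; to fetch several books, each one had to finish before the next began. This post removes that limitation by using multiple threads to download several novels at once, an approach that suits a high-performance server. The main technique is to add multithreading on top of the earlier single-novel crawler. Enough preamble; on to the details.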

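Step 1: Download a single novel. This part is not explained in detail here; see my earlier blog post "python3.6.5爬虫之一:笔趣阁小说爬取(首页爬取法)" (Python 3.6.5 crawler, part 1: crawling Biquge novels via the index page).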
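
For orientation, the core of the single-novel step in the listing later in this post is turning the chapter links found on a book's index page into an ordered, duplicate-free list of chapter URLs. A minimal sketch of that idea, assuming chapter links are relative and look like `123.html`; the names `CHAPTER_LINK` and `chapter_urls` are hypothetical and only used for illustration:

```python
import re

# Assumption: a chapter link is a relative href made of digits plus ".html".
CHAPTER_LINK = re.compile(r'\d+\.html')


def chapter_urls(book_url, hrefs):
    """Return chapter URLs for book_url, de-duplicated and sorted by chapter number."""
    numbers = set()
    for href in hrefs:
        if CHAPTER_LINK.fullmatch(href):
            numbers.add(int(href[:-5]))  # drop the ".html" suffix
    return [book_url + str(n) + '.html' for n in sorted(numbers)]


if __name__ == '__main__':
    # Sample hrefs invented for the demonstration, not taken from the real site.
    sample = ['12.html', '3.html', 'index.html', '12.html', '/book/2/']
    for chapter in chapter_urls('http://www.biquge.com/book/1/', sample):
        print(chapter)
```

Sorting numerically rather than as strings matters here: as text, `12.html` would sort before `3.html`.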

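Step 2: Analyze the structure of the Biquge site. Inspecting the page source shows that URLs have the form http://www.biquge.com/book/(number), where http is the protocol, www.biquge.com is the site's domain name (pinging it reveals the server's IP address), book is the fixed path under the server root, and number is the relative path that uniquely identifies a novel. In other words, http://www.biquge.com/book/ is constant and the trailing number selects the novel.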
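
The URL layout described above can be sketched in a few lines. `BASE_URL`, `book_url` and `book_number` are hypothetical names introduced for this illustration, and the trailing slash follows what the crawler listing in this post appends; treat it as a sketch rather than a description of the site itself:

```python
from urllib.parse import urlsplit

BASE_URL = 'http://www.biquge.com/book/'  # fixed prefix quoted in the text


def book_url(number):
    """Build the index-page URL of the novel identified by number."""
    return BASE_URL + str(number) + '/'


def book_number(url):
    """Recover the novel's number from a URL built by book_url."""
    segments = [s for s in urlsplit(url).path.split('/') if s]
    return int(segments[-1])


if __name__ == '__main__':
    url = book_url(4772)
    parts = urlsplit(url)
    # Protocol, domain name and path, matching the breakdown in the text.
    print(parts.scheme, parts.netloc, parts.path)
    print(book_number(url))
```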

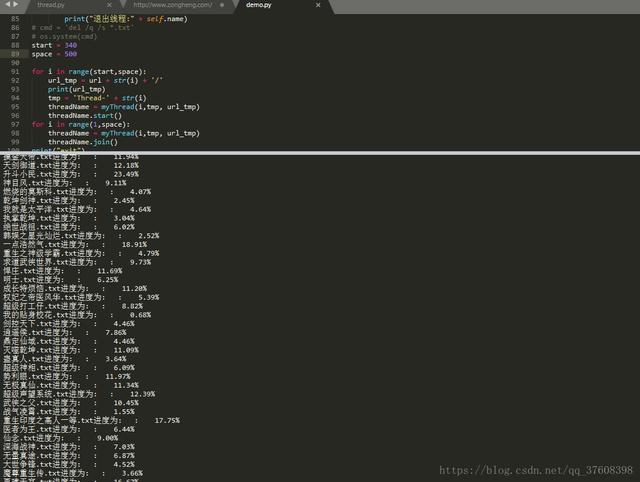

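Step 3: Multithreaded download. From the analysis in step 2, changing the number in the request URL requests a different novel. The numbers also increase from 1 with no gaps (by sending requests I found 37707 novels in total at the time of writing). Wrapping the single-novel main function from step 1 in a thread function and choosing a range for number is therefore enough to crawl many novels concurrently.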
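
As a rough sketch of this step, the example below fans a range of book numbers out over worker threads. It deliberately swaps in a different technique from the listing in this post: a bounded `concurrent.futures.ThreadPoolExecutor` instead of one thread per novel. `download_book`, `crawl_range` and the default of 8 workers are assumptions made for the illustration, and `download_book` is only a stub standing in for the single-novel function from step 1:

```python
from concurrent.futures import ThreadPoolExecutor, as_completed

BASE_URL = 'http://www.biquge.com/book/'  # fixed prefix from step 2


def download_book(url):
    """Hypothetical stub for the single-novel downloader from step 1."""
    return url  # a real implementation would fetch and save every chapter


def crawl_range(first, last, worker=download_book, max_workers=8):
    """Run worker on books first..last (inclusive) using at most max_workers threads."""
    urls = [BASE_URL + str(n) + '/' for n in range(first, last + 1)]
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(worker, u): u for u in urls}
        for future in as_completed(futures):
            u = futures[future]
            try:
                results[u] = future.result()
            except Exception as exc:  # one failed novel should not stop the others
                results[u] = exc
    return results


if __name__ == '__main__':
    done = crawl_range(1, 5)
    print(len(done), 'books processed')
```

Capping the number of workers keeps the load on both the crawler's machine and the target server predictable, whatever the size of the number range.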

Result screenshots:

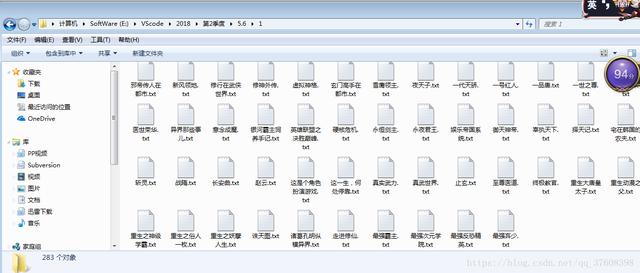

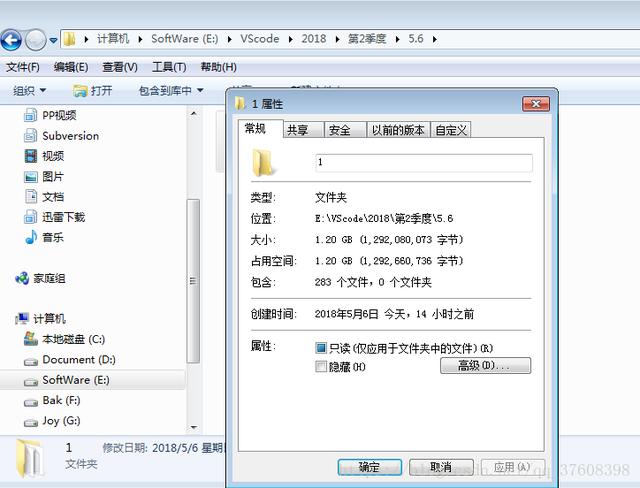

The 200-plus novels crawled earlier (about 1.2 GB):

Code:

# coding: utf-8
import re
import threading
from urllib import request

from bs4 import BeautifulSoup

# Base URL shared by every novel; each worker thread appends "<number>/" to it.
# Single-novel URLs used in earlier versions:
#   'http://www.biqiuge.com/book/4772/', 'https://www.qu.la/book/1/'
url = 'http://www.biqiuge.com/book/'

def getHtmlCode(url):
    """Download a page and return it as a parsed BeautifulSoup tree."""
    with request.urlopen(url) as page:  # close the connection when done
        html = page.read()
    htmlTree = BeautifulSoup(html, 'html.parser')
    return htmlTree

def getKeyContent(url):
    # Unused helper left over from the single-novel version; it only fetches the page.
    htmlTree = getHtmlCode(url)
    return htmlTree

def parserCaption(url):
    """Return (output file name, ordered chapter URLs) for the book index page at url."""
    htmlTree = getHtmlCode(url)
    storyName = htmlTree.h1.get_text() + '.txt'
    print('Novel:', storyName)
    # find_all returns Tag objects. The pattern assumes relative chapter links
    # such as "123.html"; anything else (e.g. "index.html") would break int() below.
    aList = htmlTree.find_all('a', href=re.compile(r'^\d+\.html$'))
    aDealList = []
    for line in aList:
        chapter = int(line['href'][0:-5])  # drop the ".html" suffix
        if chapter not in aDealList:  # de-duplicate
            aDealList.append(chapter)
    aDealList.sort()  # ascending chapter order
    urlList = []
    for line in aDealList:
        urlList.append(url + str(line) + '.html')
    return (storyName, urlList)

def parserChapter(url):
    """Return (chapter title, chapter text) for one chapter page."""
    htmlTree = getHtmlCode(url)
    title = htmlTree.h1.get_text()  # chapter title
    content = htmlTree.find_all('div', id='content')
    content = content[0].contents[1].get_text()
    return (title, content)

def main(url):
    """Download every chapter of one novel and append it to the file <storyName>."""
    (storyName, urlList) = parserCaption(url)
    count = 1
    for url_alone in urlList:
        lv = count / len(urlList) * 100
        message = storyName + ' progress'
        print('%-9s: %0.2f%%' % (message, lv))
        (title, content) = parserChapter(url_alone)
        # Append mode: a rerun adds to an existing file instead of replacing it.
        with open(storyName, 'a+', encoding='utf-8') as f:
            f.write(title + '\n' + content + '\n')
        count = count + 1

class myThread(threading.Thread):
    """Worker thread that downloads a single novel."""

    def __init__(self, threadID, name, url):
        threading.Thread.__init__(self)
        self.threadID = threadID
        self.name = name
        self.url = url

    def run(self):
        print('Thread started: ' + self.name)
        main(self.url)
        print('Thread finished: ' + self.name)

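start = 340  # first book number to download
space = 500  # stop before this book number (exclusive upper bound)
threads = []
for i in range(start, space):
    url_tmp = url + str(i) + '/'
    print(url_tmp)
    tmp = 'Thread-' + str(i)
    threadName = myThread(i, tmp, url_tmp)
    threadName.start()
    threads.append(threadName)  # keep a reference so the thread can be joined

# Wait for the threads that were actually started; calling join() on a newly
# created, unstarted thread raises RuntimeError.
for threadName in threads:
    threadName.join()
print("exit")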
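
One detail of the final wait is worth isolating: `join()` only works on a thread object that has already been started, so references to the started threads need to be kept. A minimal sketch, independent of the crawler; `run_all` and `join_unstarted` are hypothetical helper names used only for this demonstration:

```python
import threading


def run_all(targets):
    """Start one thread per callable and wait until every one has finished."""
    threads = [threading.Thread(target=t, name='Thread-%d' % i)
               for i, t in enumerate(targets)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()  # these are the same objects that were started above
    return threads


def join_unstarted():
    """Return the error raised when join() is called on a never-started thread."""
    try:
        threading.Thread(target=lambda: None).join()
    except RuntimeError as exc:
        return exc
    return None


if __name__ == '__main__':
    finished = []
    run_all([lambda n=n: finished.append(n) for n in range(3)])
    print(sorted(finished))
    print(repr(join_unstarted()))
```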
